12 Planning & RL for Transformers (Advanced Topic)

This Module

Last Module: Deep learning & Tree Search

This Module: Planning & RL for Transformers

What is covered? How to implement planning and RL on GPUs/TPUs.

Introduction to Attention-based Transformers

Attention-based Transformers & Implicit Planning

Attention-based transformers can simulate multiple futures inside a hidden state.

Self-attention makes lookahead possible:

- Attention learns which futures matter

- Transformers can simulate “multiple futures” inside the hidden state

Implicit planning is an amortised form of planning: the cost of planning is paid upfront during training, so that at test time the system can act without running a full planner or search each time.

- Attention-based transformers give a neural network model the capacity for this kind of implicit planning

The Elements of Attention-based Transformers

- Autoregression & joint distribution factorisation

- Masked latent transformers

- Attention-based transformers

- Causal masking

1. Autoregression & joint distribution factorisation

Autoregression models a sequence by predicting each element from all previous elements, i.e. \(x_t = f(x_{1:t-1}) + \epsilon\) where \(\epsilon\) is the noise or uncertainty. It factorises a joint distribution as follows:

\[ p(x_{1:T}) = \prod_{t=1}^{T} p(x_t \mid x_{1:t-1}) \]

where \(p(x_{1:T})\) is the probability of seeing the entire sequence.

Used in time series, sequence modelling, and autoregressive transformers.

At inference time, predictions are fed back step-by-step to generate sequences.

To enforce causal structure, transformers use masking (see 4. Causal masking).
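A minimal sketch of the factorisation and of step-by-step generation, in Python with NumPy. The conditional model `next_token_probs` is a hypothetical toy (over a 3-token vocabulary it favours repeating the previous token) standing in for a trained autoregressive transformer: the joint log-probability is the sum of the conditional log-probabilities, and each sampled token is appended to the context before the next prediction.

```python
import numpy as np

VOCAB = 3  # toy vocabulary {0, 1, 2}

def next_token_probs(prefix):
    """Hypothetical toy model p(x_t | x_{1:t-1}): favours repeating the last token."""
    if not prefix:
        return np.full(VOCAB, 1.0 / VOCAB)  # uniform distribution over the first token
    probs = np.full(VOCAB, 0.1)
    probs[prefix[-1]] = 0.8                 # 0.8 + 0.1 + 0.1 = 1.0
    return probs

def sequence_log_prob(seq):
    """log p(x_{1:T}) = sum_t log p(x_t | x_{1:t-1}) (chain-rule factorisation)."""
    return sum(np.log(next_token_probs(seq[:t])[seq[t]]) for t in range(len(seq)))

def generate(T, rng):
    """Autoregressive sampling: each sampled token is fed back as context."""
    seq = []
    for _ in range(T):
        seq.append(int(rng.choice(VOCAB, p=next_token_probs(seq))))
    return seq

rng = np.random.default_rng(0)
print(generate(5, rng))
print(np.exp(sequence_log_prob([1, 1, 2])))  # (1/3) * 0.8 * 0.1, about 0.0267
```

Summing `np.exp(sequence_log_prob(seq))` over every possible sequence of a fixed length gives 1, a quick check that the chain-rule factorisation defines a proper joint distribution.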

2. Masked (causal) latent transformers

A causal latent transformer is a transformer that models sequences of latent states, using attention masks to enforce causality or planning structure, just like in autoregressive language models.

- It is a transformer that predicts (or refines) latent variables in a sequence, but only using allowed past or partial information, enforced through a mask.

Causal latent transformers appear in world-model RL, video generation, and planning-as-generation frameworks (implicit planning in neural networks).

- They are increasingly used as a replacement for recurrent neural network (RNN) based latent dynamics models, such as the Recurrent State-Space Model (RSSM) used in Dreamer-like systems (note that Dreamer and other recurrent approaches are not covered in this Module).

3. Attention-based transformers

Transformers model sequences using self-attention, where each token computes weighted interactions with other tokens.

Key components:

Token embeddings and positional encodings

Self-attention:

\[ \mathrm{Attn}(Q, K, V) = \mathrm{softmax}\!\left( \frac{QK^\top}{\sqrt{d_k}} \right) V \]

where \(Q=\mathit{queries}\), \(K=\mathit{keys}\), \(V=\mathit{values}\), \(d_k\) is the dimension of the key vectors (dividing by \(\sqrt{d_k}\) stops the dot products growing with the dimension) and \(\top\) denotes the matrix transpose.

- Multi-head attention, feed forward layers, residual connections
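A single-head sketch of the attention formula in NumPy, with no batching, multi-head splitting or masking. The projection matrices `W_q`, `W_k`, `W_v` are random stand-ins for learned weights (an assumption for illustration only); `attention` returns both the output and the weight matrix, so each row of the weights can be read as how much one token attends to every other token.

```python
import numpy as np

def softmax(x, axis=-1):
    """Numerically stable softmax along `axis`."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def attention(Q, K, V):
    """Attn(Q, K, V) = softmax(Q K^T / sqrt(d_k)) V for a single head.

    Q: (T_q, d_k), K: (T_k, d_k), V: (T_k, d_v). Returns (output, weights).
    """
    d_k = Q.shape[-1]
    scores = Q @ K.T / np.sqrt(d_k)     # (T_q, T_k): similarity of each query to each key
    weights = softmax(scores, axis=-1)  # each row is a distribution over keys
    return weights @ V, weights

rng = np.random.default_rng(0)
T, d_k, d_v = 4, 8, 8
X = rng.normal(size=(T, d_k))  # toy token representations
# Hypothetical random projections standing in for learned weights
W_q, W_k, W_v = (rng.normal(size=(d_k, d)) for d in (d_k, d_k, d_v))
out, w = attention(X @ W_q, X @ W_k, X @ W_v)
print(out.shape, w.sum(axis=-1))  # output shape (4, 8); each row of weights sums to 1
```

If all keys are identical, every query attends uniformly and the output is simply the mean of the value vectors, which is a handy sanity check.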

4. Causal masking

Causal masking is used in autoregressive transformers:

Each token attends only to past tokens

Enforces the autoregressive condition

\[ x_t \sim p(x_t \mid x_{1:t-1}) \]

where \(\sim\) means sampled from.

This condition means that when predicting the next token, the model is only allowed to use earlier tokens, not future ones.

This masked attention mechanism directly carries over to masked latent transformers used in world-model RL.
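The causal mask can be sketched by setting the scores of all future positions to \(-\infty\) before the softmax, so that they receive zero attention weight. This NumPy sketch is single-head and uses the raw token vectors as queries, keys and values (no learned projections), purely for illustration.

```python
import numpy as np

def causal_attention(Q, K, V):
    """Single-head attention with a causal mask: position t attends only to positions <= t."""
    T, d_k = Q.shape
    scores = Q @ K.T / np.sqrt(d_k)
    future = np.triu(np.ones((T, T), dtype=bool), k=1)    # True above the diagonal = future tokens
    scores = np.where(future, -np.inf, scores)            # masked scores get zero weight after softmax
    scores = scores - scores.max(axis=-1, keepdims=True)  # stable softmax; the diagonal is never masked
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)
    return weights @ V, weights

rng = np.random.default_rng(0)
X = rng.normal(size=(5, 4))
out, w = causal_attention(X, X, X)
print(np.round(w, 2))  # lower-triangular: no weight on future tokens
```

Because no row can see later positions, perturbing the last token leaves the outputs at all earlier positions unchanged, which is exactly the autoregressive condition above.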

Masked latent transformers & languages

The original Transformer was introduced as an attention-only sequence model, initially for natural language translation

- but its broader importance is that it has become a general computational approach for modelling structured representations.

- Masked latent transformers have been shown to be Turing complete, under idealised assumptions such as arbitrary numerical precision.

Importantly, transformers have shown that multiple languages can be treated as sequences or structured token streams

- For example English (or any language), Python, Markdown/LaTeX (these slides), mathematical proofs, SQL, even multi-modal representations.

Visualising attention-based transformers

A useful way to visualise attention-based transformers is as follows:

Horizontal Axis (Tokens/Sequence): Each position corresponds to a specific token (word or sub-word) in your input.

Vertical Axis (Layers/Depth): As you move “up” the network layers, the model is building more abstract, contextual meanings. The bottom layers might focus on simple syntax, while higher layers handle complex relationships and intent.

Attention Intersections: The “links” connecting horizontal tokens across the vertical layers represent the Attention Weights, showing which other words the model is looking at to understand the current word.

Visualising attention-based transformers (continued)

This 3Blue1Brown video demonstrates the horizontal (tokens) / vertical (layers) visualisation.

Example: Masked latent transformers in LLMs

For large language models (LLMs) in natural language processing

Tokens = words or sub-words

Mask = causal mask (can’t see the future)

In masked latent transformers:

Tokens = latent states \(z_t\) (learned hidden representations)

Mask = ensures correct temporal, causal, or planning structure

Example: LLMs for PDDL

Pretrained LLMs can also harness PDDL for generalised planning by being prompted with a PDDL domain and a small number of training problem instances.

A solution to generalised planning finds a reusable policy that solves many related problem instances, not just one specific initial state.

GPT-4 is used to synthesise a generalised planner as a Python program; the program is tested and, if it fails, GPT-4 is re-prompted with debugging feedback.

GPT-4's synthesised planners outperform Fast Downward, one of the leading domain-independent classical planners, in speed on generalised planning benchmarks

They are shown to be 1-2 orders of magnitude faster during inference, but do not quite match Fast Downward's coverage on the benchmarks (a few problems are missed), although subsequent models are likely to improve on this.

Silver et al. (2024) Generalized planning in PDDL domains with pretrained large language models https://ojs.aaai.org/index.php/AAAI/article/view/30006

Serialization of Reasoning: Chain of Thought

Serialization of Chain of Thought Reasoning

Reasoning in attention-based transformers is implicit because it happens inside a single serialized token stream

- Reasoning tokens represent the reasoning process, capturing the steps of chain of thought reasoning

- Self-Reflection & Correction: Serialization facilitates self-reflection via specialised tokens (e.g., “wait,” “but”) which act as triggers for error-checking and hypothesis reassessment.

- Computational State: Tokens serve as a persistent “scratchpad,” allowing the model to offload intermediate results and extend its computational budget.

- Backtracking: Enables the model to autonomously identify and pivot away from incorrect logical branches without external reward models.

Example: DeepSeek-R1 (Mathematics)

1. Initial Logic & Calculation

The model begins solving a geometry puzzle by mapping variables.

Reasoning Token Stream

“To find the area, I first need the radius. The circumference is \(20\pi\), so \(2\pi r = 20\pi\), which means \(r = 10\). Now, the area is \(\pi r^2\), so \(10 \times 10 = 100\)…”

2. Self-Correction

A trigger word (“Wait”) signals that the model has detected a constraint violation.

Reasoning Token Stream (continued)

“…Wait. I misread the prompt. The \(20\pi\) was the area of a different circle, not the circumference. Let me re-evaluate.”

3. Alternative Strategy Exploration

The model discards the old path and restarts with the correct data.

Reasoning Token Stream (continued)

“…If the area is \(20\pi\), then \(\pi r_1^2 = 20\pi\), so \(r_1 = \sqrt{20}\). The prompt says the second radius is double the first…”

4. Final Verification

Before outputting, the model checks for internal consistency.

Reasoning Token Stream (continued)

“…So \(r_2 = 2\sqrt{20}\). Area = \(\pi(2\sqrt{20})^2 = 80\pi\). Does this make sense? Yes, \(80\pi\) is \(4\times\) the original area, matching the square of the radius doubling. Final Answer: \(80\pi\).”

- GRPO: Shao et al. (2024), DeepSeekMath arXiv:2402.03300

- DeepSeek-R1 Guo et al. (2025) DeepSeek-R1 incentivizes reasoning in LLMs through reinforcement learning, Nature https://www.nature.com/articles/s41586-025-09422-z

Example: OpenAI o1, Claude 3.7 Sonnet & DeepSeek-R1

OpenAI's o1 and subsequent models use chain of thought as a core reasoning mechanism.

- o1 introduced chain of thought style reasoning at inference time in 2024, with the model trained using RL to reason before answering

Claude 3.7 Sonnet introduced an extended thinking mode (chain of thought reasoning) in 2025.

DeepSeek-R1 utilises chain of thought

- R1 uses Group Relative Policy Optimisation (GRPO), a memory-efficient fine-tuning technique (it needs no separate critic network) that harnesses chain of thought at training and inference (covered later in this Module).

Example: Gemini Deep Think

Gemini Deep Think is a reasoning mode designed for hard problems in mathematics, coding, planning, and science.

Rather than producing one immediate answer, it uses:

Extended inference-time compute: more “thinking time” before answering.

Parallel thinking: multiple hypotheses or solution paths are explored and compared.

Critique and revision: candidate answers can be checked, refined, or rejected.

RL-shaped reasoning behaviour: Google describes novel reinforcement learning techniques that encourage the model to use longer, multi-step reasoning paths.

Example: Mathematics Gold Medal (Gemini Deep Think)

DeepMind’s Gemini Deep Think model achieved gold-medal standard at the International Mathematical Olympiad (IMO)

Example: Gemini Deep Think (RL analogy)

Deep Think can be viewed as moving from answer prediction toward policy improvement over reasoning traces.

| RL concept | Deep Think analogy |
|---|---|
| State | Current problem + partial reasoning context |
| Action | Generate, branch, critique, revise, verify |
| Reward | Correctness, coherence, problem-solving success |
| Policy | Model’s learned strategy for choosing reasoning steps |
| Search | Parallel exploration of possible solution paths |

Example: Chain of thought in Anthropic’s Mythos

Anthropic’s Mythos model utilises human supervised chain of thought

Mythos is useful for penetration testing in cyber security

Utilises human supervised reasoning traces from chains of thought as a training target

Human supervision steers the model toward identifying genuinely serious vulnerabilities, dangerous bug classes, and potential security flaws

The model is trained to learn meaningful exploit hypotheses

Classical planning was originally used for penetration testing, through attack models represented in PDDL.

Faithfulness: Human Supervised Chain of Thought

Human supervised chain of thought models can create a safety challenge, as the model is trained to generate reasoning traces that humans expect

The risk is that the model learns to produce reasoning that looks acceptable rather than reasoning that is fully faithful

This can lead to what appears from a human perspective to be deceptive behaviour by the model, even though it makes sense from the attention-based transformer’s loss perspective.

Faithfulness: Open Problem in LLMs

Faithfulness of chain-of-thought reasoning under human supervision remains an unresolved problem

- Most LLMs do not directly train on human-labelled reasoning traces as ground-truth targets (or do so only in limited ways)

The key challenge is a tension between:

Performance: reasoning-trace supervision can improve capability (e.g. decomposition, verification, structure)

Faithfulness: the trace may not reflect the model’s true internal reasoning

A reasoning trace can be useful, plausible and reward-optimised without being causally faithful, i.e. improving reasoning \(\neq\) ensuring faithful explanations

Example: Edge Computing (Gemma 4: Open Multimodal Model)

DeepMind released Gemma 4 on 2 April, 2026.

Performance: The 31B model acts as a “server-grade” model for local use, while smaller variants (2B/4B) are optimised for mobile, edge and on-device.

Agentic Capabilities: Built for multi-step planning, autonomous actions, and tool use.

Multimodal: Natively supports text, images, and audio, along with dynamic vision resolution.

Large Context: Supports up to 256,000 token context windows

Open-Source: Released under the Apache 2.0 licence

Knowledge distillation is a key element in training Gemma 4.

Agentic (Multi-Agent) Transformers

Major paradigms

Major paradigms are emerging which reflect a convergence of RL with autoregression and transformer models.

Multi Actor-Critic Transformers (online, no dynamics).

World-Model RL (planning, includes dynamics)

Vision (multi-modal)

Agentic (roles)

Example: Actor-Critic Transformers

Actor-critic transformers are RL agents that use transformer architectures to parameterise

- the actor, critic, or both,

- while still relying on Bellman equations and policy gradients for learning.

Actor-critic transformers typically outperform Long Short-Term Memory (LSTM) networks in long-horizon Partially Observable Markov Decision Processes (POMDPs)

Three Layer Agent Stack

| Layer | Role (e.g. of an Agent or Robot) | Typical tools |
|---|---|---|
| Cognition / Reasoning | Query answering, Programming, multi-step thinking & planning: goal decomposition, tool selection, self-reflection, backtracking & correction, safety checks etc. | LLM with serialized Chain of Thought (GPT, Gemini, Claude, DeepSeek - Thinking, Programming & Operator modes; OpenClaw, MiniMax, etc.) |
| Semantic Policy (Vision–Language–Action) | Grounds instructions & scene into actionable subgoals / waypoints | Vision-Language-Action (VLA) Transformers (RT-X / RT-2-X) |
| Control / Dynamics | Execute precise motions, stabilize, react to dynamic feedback | Generative Model-based RL (STORM) |

Agent decomposition: Serialization

Agent decomposition can itself be learned through chain of thought reasoning

Attention-based transformers can infer useful sub-agents, subgoals, or roles from the serialization of context in the token stream, i.e. they can:

- Decompose a difficult task into subproblems

- Assign roles, tools, or expertise to sub-agents

- Coordinate their interaction through shared context

- Learn useful decompositions directly from experience or data

In this sense, agentic structure need not be engineered entirely by hand; it can be learned from human data, experience or self-supervision (simulation).

Example: OpenAI’s Codex Command Line Interface (CLI)

OpenAI’s Codex CLI serves as a primary example of how Chain-of-Thought (CoT) prompts can naturally transition into Agent Decomposition for software tasks.

- CLI Workflow: By interfacing via the command line, we treat the LLM as a “Manager Agent” that breaks down high-level requirements into executable sub-tasks.

Architectural Training:

Prompting by Example: Use few-shot prompting with examples of successful system architectures to “train” the model on how to delegate.

Reasoning-to-Execution: CoT allows the agent to think (e.g., “I need a database schema first, then the API agents”) before the CLI executes the file creation.

Feedback Loop:

Self-Correction: The agent can inspect CLI error outputs to refine its decomposition logic in real-time.

Modularity: High-level CoT ensures each module is built in isolation, mimicking a multi-agent environment within a single interface.

“The conversation with the CLI essentially orchestrates a system of sub-agents directed by a serialised chain of thought reasoning chain.”

Agent decomposition challenge: Multi-Agent Systems

The decomposition of agents into multiple sub-agents, together with their respective roles, coordination mechanisms, and communication protocols remains an important challenge in the agentic approach.

Research and development into multi-agent systems has a long and fertile history in artificial intelligence

- There are many solutions to agentic problems in the existing literature.

Example: OpenClaw: agent RL from live interaction

OpenClaw is a framework for training LLM-based agents, such as Codex CLI/Claude, online, from normal usage.

Core idea: after each action, the agent observes the next state:

- user reply

- tool output

- terminal result

- GUI change

These next states are treated as RL feedback signals.

OpenClaw: OpenClaw-RL

OpenClaw-RL allows agents to learn and improve simply from users talking to them

- OpenClaw uses a training objective based on a standard PPO-style clipped surrogate (covered later in this module).

OpenClaw: moltbook & ClawHub

OpenClaw has experienced record-breaking growth since its launch in November 2025

- OpenClaw is frequently used to write agents which run on moltbook

- ClawHub is a forum where you register and discover new skills.

OpenClaw: Sequential Markov Decision Process (MDP)

OpenClaw frames agent interaction as a sequential Markov Decision Process:

- state = current conversational / tool context

- action = generated response or tool-use step

- transition = what happens next in the environment

- reward = inferred from the resulting next state

OpenClaw reframes ordinary agent interaction as an MDP-like loop - rather than relying only on static preference datasets, it learns from what actually happens after the model acts.

OpenClaw: RL Techniques

Key RL idea: learn from experience, not just offline data. It utilises two kinds of signal from the next state:

- evaluative signal: how good the action was

- directive signal: how the action should be improved

OpenClaw’s emphasis is on a fully asynchronous setup:

- servers,

- rollout collection,

- judging / reward estimation, and

- training

all run concurrently

OpenClaw: RL Techniques (continued)

It connects LLM agents back to standard RL ideas

- but in open-ended, tool-using, partially observed environments

Limitation:

- reward estimation is indirect and depends on judges / heuristics

- stability and safety rely on multi-agent architecture techniques

OpenClaw uses tools, shells, and GUIs.

Example: MiniMax / Forge: large-scale RL for agents

MiniMax / Forge is an RL framework for training agentic foundation models at scale.

- MiniMax M2.5, the associated foundation model, is a mixture of experts (MoE) model trained across many real-world task environments

- Forge is the RL training framework and infrastructure (the trainer), specialising in connecting models with live environments (terminals, browsers, code repositories etc.)

Main concern: scaling RL while balancing three competing goals:

- throughput

- stability

- agent flexibility

MiniMax / Forge: large-scale RL for agents (continued)

The emphasis is less on a single elegant RL algorithm, and more on:

- scalable infrastructure

- asynchronous scheduling

- efficient rollout + training pipelines

- composite reward design

MiniMax / Forge: why it matters for RL

Reinforcement learning is being pushed into messy, long-horizon productivity tasks:

- coding

- search

- office workflows

- tool use

Key insight:

- frontier RL is increasingly about training agents in many realistic environments

MiniMax can be used by no-code agent platforms such as MindStudio

RL can optimise task decomposition and tool-use policies for MiniMax / Forge

- rewards may be composite, delayed, noisy, and environment-specific

- scaling requires attention to off-policy effects, variance, and system design

Comparison with classical RL:

- same basic loop: act \(\rightarrow\) observe \(\rightarrow\) evaluate \(\rightarrow\) update

- different regime: huge contexts, tool chains, heterogeneous tasks, expensive rollouts

Planning & RL for Transformer Models

Planning & RL techniques for Transformers?

Sequential decision-making is on the ascendant:

RL, model-based control, and planning-like reasoning are central to agents, robotics, and tools built on tensor-based (e.g. transformer) architectures.

So “planning” and “RL” must live inside parallel, scalable neural network systems.

Limiting assumptions:

- Classical planning & Reinforcement Learning’s typical teaching setup (fully observable, deterministic, stationary, discrete) mismatches many modern AI settings.

Planning & RL techniques for Transformers (continued)

Compute & tooling:

Tree search doesn’t map cleanly onto GPU/TPU throughput the way dense tensor operators do

Differentiability matters for end-to-end training, credit assignment, and integration with deep stacks

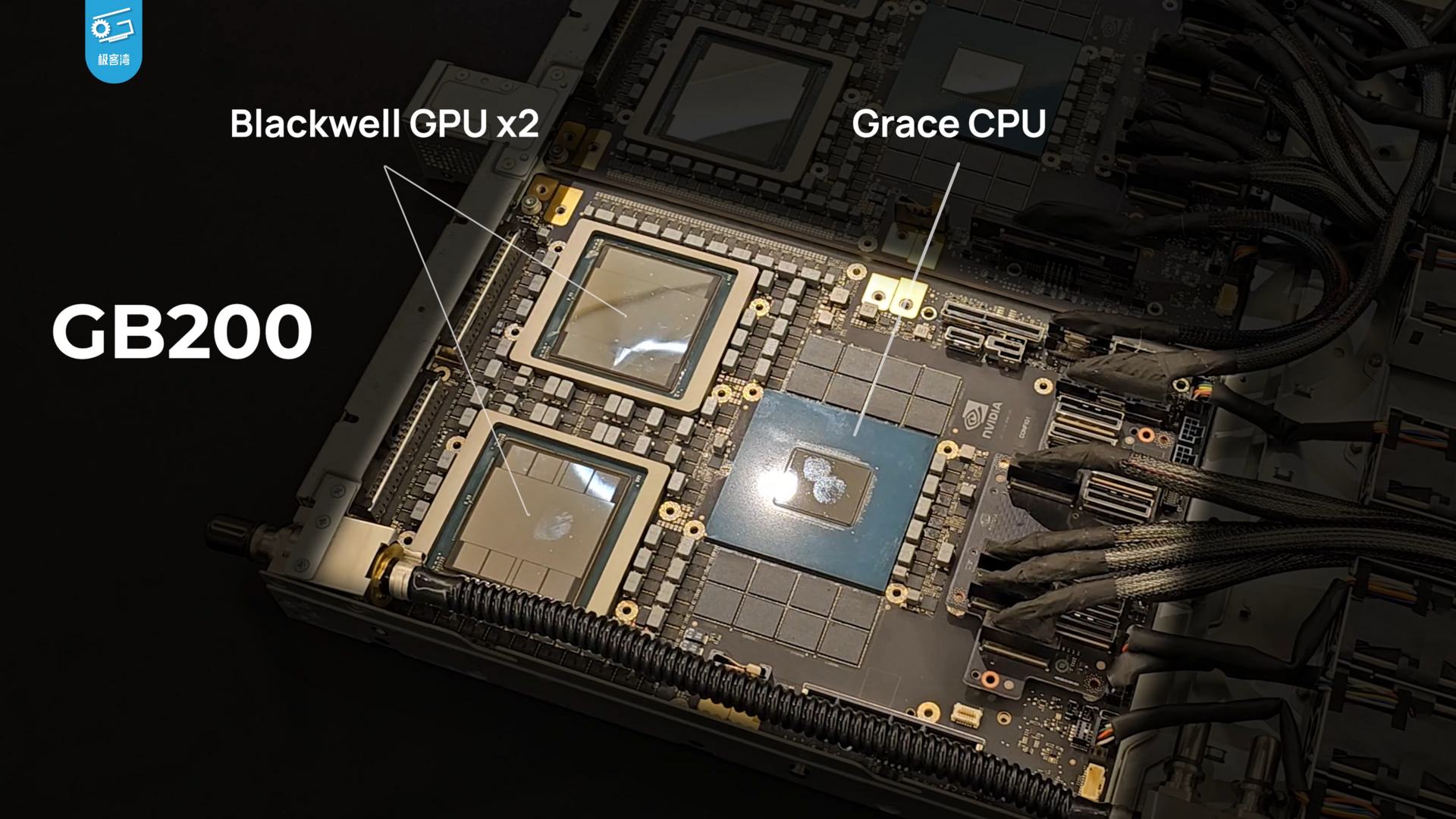

Question: What is required to run RL & Planning on GPUs?

Nvidia’s liquid-cooled GB200 Grace Blackwell (Tensor Core) Superchip can connect up to 576 Blackwell GPUs in a single domain with over 1 PB/s total bandwidth (image by 极客湾Geekerwan, CC BY 3.0, Link)

What is required to run RL & Planning on a GPU?

- During training?

- At inference (query time)?

Value & Policy Approximation: Soft Actor-Critic (SAC)

Soft Actor-Critic (SAC): Differentiable Planning

Greedy next-step choice using max

- defines the Bellman optimality operator used in Q-learning/DQN. \[ Q^*(s,a) = r(s,a) + \gamma \,\mathbb{E}_{s'\sim P}\!\left[\max_{a'} Q^*(s',a')\right] \]

Soft (Entropy-Regularized) Bellman Backup - “softmax” \[ \begin{align*} Q_{\text{soft}}(s,a) & = r(s,a) + \gamma \,\mathbb{E}_{s'\sim P}\!\left[ V_{\text{soft}}(s')\right] \quad \\[0pt] V_{\text{soft}}(s) & = \mathbb{E}_{a'\sim \pi(\cdot|s)}\!\left[ Q_{\text{soft}}(s,a') - \alpha \log \pi(a'|s)\right] \end{align*} \]

Adds entropy bonus (temperature \(\alpha\)) ⇒ softens the hard max.

As \(\alpha\!\to\!0\): \(V_{\text{soft}}(s)\!\to\!\max_{a'} Q(s,a')\) (recovers hard backup).
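For a discrete action set, the soft value can be checked numerically. Assuming \(\pi\) is the soft-optimal (Boltzmann) policy \(\pi(a\mid s) \propto \exp(Q(s,a)/\alpha)\), the expectation in \(V_{\text{soft}}\) equals \(\alpha \log \sum_{a} \exp(Q(s,a)/\alpha)\), which is always at least \(\max_a Q(s,a)\) and approaches it as \(\alpha \to 0\). This NumPy sketch uses hypothetical Q-values; SAC itself works with continuous actions and sampled estimates.

```python
import numpy as np

def log_boltzmann(q, alpha):
    """log pi(a|s) for the soft-optimal policy pi proportional to exp(Q/alpha), computed stably."""
    z = q / alpha
    z = z - z.max()
    return z - np.log(np.sum(np.exp(z)))

def soft_value(q, alpha):
    """V_soft(s) = E_{a~pi}[Q(s,a) - alpha * log pi(a|s)] under the Boltzmann policy."""
    log_pi = log_boltzmann(q, alpha)
    pi = np.exp(log_pi)
    return float(np.sum(pi * (q - alpha * log_pi)))

def hard_target(r, gamma, q_next):
    """Bellman optimality target: r + gamma * max_a' Q(s', a')."""
    return r + gamma * float(np.max(q_next))

def soft_target(r, gamma, q_next, alpha):
    """Soft Bellman target: r + gamma * V_soft(s')."""
    return r + gamma * soft_value(q_next, alpha)

q_next = np.array([1.0, 2.0, 0.5])  # hypothetical Q(s', a') for three actions
for alpha in (1.0, 0.1, 0.01):
    print(alpha, soft_target(0.0, 0.99, q_next, alpha), hard_target(0.0, 0.99, q_next))
```

As \(\alpha\) shrinks, the soft target approaches the hard target from above, while for larger \(\alpha\) every action contributes smoothly, which is what makes the backup differentiable with respect to all Q-values.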

Soft Actor-Critic (SAC): Implementation note

A min over two critics, \(\min\{Q_{\theta_1},Q_{\theta_2}\}\), is often used to reduce overestimation bias (Double-Q trick), not as the backup operator.

- It is the soft (entropy-regularised) backup, not this min, that relaxes the hard max and allows gradients to flow through the planning step.
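A minimal NumPy sketch of how the two critics enter an SAC-style critic target for a batch of transitions: the smaller of the two target-critic estimates is taken, and the entropy term is added using the log-probability of a next action sampled from the current policy. The inputs are assumed to have been produced already by hypothetical critic and actor networks; `gamma=0.99` and `alpha=0.2` are common illustrative defaults, not prescribed values.

```python
import numpy as np

def sac_critic_target(r, done, q1_next, q2_next, logp_next, gamma=0.99, alpha=0.2):
    """SAC-style critic target for a batch of transitions.

    q1_next, q2_next: target-critic values Q_1(s', a'), Q_2(s', a') for a' ~ pi(.|s')
    logp_next: log pi(a'|s') for the same sampled next actions
    """
    min_q = np.minimum(q1_next, q2_next)      # Double-Q trick: pessimistic estimate
    soft_v = min_q - alpha * logp_next        # single-sample estimate of V_soft(s')
    return r + gamma * (1.0 - done) * soft_v  # no bootstrapping past terminal states

r = np.array([1.0, 0.0])
done = np.array([0.0, 1.0])
y = sac_critic_target(r, done, np.array([5.0, 3.0]), np.array([4.0, 6.0]), np.array([-1.0, -2.0]))
print(y)  # first target: 1 + 0.99 * (4.0 + 0.2) = 5.158; the second transition is terminal
```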

Example: Table Tennis Player using Soft Actor-Critic (SAC)

Sony-AI’s Ace player is trained entirely on single shots in simulation using the Soft Actor-Critic (SAC) algorithm to return ball with desired skill, beating elite players.

- Durr et al., (2026) Outplaying elite table tennis players with an autonomous robot, Nature https://www.nature.com/articles/s41586-026-10338-5

Imagination & Latent Rollouts: STORM

STORM (Stochastic Transformer World Models): Atari

Stochastic Transformer-based wORld Model (STORM) targets the Atari 100k benchmark games, like MuZero.

STORM: How it works

A world model-based RL method for learning in imagination (simulation inside a learned world model)

It combines:

- Categorical Variational Autoencoder (VAE): Encodes raw images into discrete latent “tokens.”

- Transformer Dynamics: An attention-based sequence model that predicts future states (imagination).

- Stochastic Latent Variable: Accounts for environmental uncertainty and randomness.

- Actor-Critic Agent: A separate neural network learns the optimal policy by “practising” entirely within the Transformer’s imagined rollouts.

STORM: Imagination & Latent Rollouts

Imagination: Latent rollouts in STORM

- Start: Use a latent token (from the VAE) as the initial “imagined” state.

- Action: The Actor selects an action for this state.

- Dynamics: The Transformer predicts a stochastic (probabilistic) distribution for the next state and reward.

- Judgement: The Critic estimates the long-term value of this new state.

- Loop: Sample a token from the distribution and feed it back into the Action step

Repeating the steps in imagination forms a trajectory.

This allows the agent to learn a policy (how to act) or a value function (how “good” a state is) purely by practising inside its own “head”.

Imagination is technically known as latent rollouts, a widely accepted approach for generative model-based reinforcement learning.
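The loop above can be sketched with toy components. Here the actor, dynamics model, reward head and critic are hypothetical random lookup tables over 6 discrete latent tokens, rather than a VAE and a stochastic transformer (which would condition on the whole latent history, not just the current token); the point is only the control flow: act, sample the next latent, feed it back, and judge the final state with the critic. The return is a simple bootstrapped discounted sum; practical world-model agents often use \(\lambda\)-returns instead.

```python
import numpy as np

rng = np.random.default_rng(0)
N_LATENT, N_ACTIONS, HORIZON, GAMMA = 6, 2, 5, 0.99

# Hypothetical stand-ins for learned networks (random tables, not trained models)
policy = rng.dirichlet(np.ones(N_ACTIONS), size=N_LATENT)                # actor: pi(a | z)
dynamics = rng.dirichlet(np.ones(N_LATENT), size=(N_LATENT, N_ACTIONS))  # p(z' | z, a)
reward = rng.normal(size=(N_LATENT, N_ACTIONS))                          # predicted reward r(z, a)
value = rng.normal(size=N_LATENT)                                        # critic: V(z)

def imagine(z0, horizon, rng):
    """Roll out a trajectory purely in latent space (no real environment steps)."""
    z, traj = z0, []
    for _ in range(horizon):
        a = rng.choice(N_ACTIONS, p=policy[z])           # Action: the actor picks an action
        z_next = rng.choice(N_LATENT, p=dynamics[z, a])  # Dynamics: sample the next latent token
        traj.append((z, a, reward[z, a], z_next))
        z = z_next                                       # Loop: feed the sample back in
    return traj

def imagined_return(traj, gamma=GAMMA):
    """Discounted imagined reward, bootstrapped with the critic's Judgement of the last state."""
    g = value[traj[-1][3]]
    for (_, _, r, _) in reversed(traj):
        g = r + gamma * g
    return g

traj = imagine(z0=0, horizon=HORIZON, rng=rng)
print(len(traj), imagined_return(traj))
```

Because every step is a tensor lookup or sample, many such rollouts can be batched on a GPU, which is the practical appeal over branching tree search.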

STORM: Motivation

Transformers are strong at long-range sequence modelling.

Stochastic latents help capture uncertainty and non-determinism

- i.e. a stochastic latent says, given this past, there may be several plausible next hidden states

STORM achieves 126.7% mean human-normalised score on the Atari 100k benchmark, a state-of-the-art result among methods without look-ahead search.

MuZero branches over actions with MCTS, whereas STORM rolls forward imagined latent sequences in a transformer world model

- This makes STORM more GPU-friendly and more naturally aligned with end-to-end differentiable learning.

Comparison: MuZero versus STORM

| Aspect | MuZero | STORM |
|---|---|---|
| Core idea | Combines learning + Monte-Carlo Tree Search (MCTS) in latent space | Learns a stochastic transformer world model and performs imagination rollouts |
| Planning form | Expands a search tree: \(s_0 \rightarrow \{s_1, s_2, \ldots\}\) | Rolls out a latent sequence using a transformer world model: \(z_t \rightarrow z_{t+1} \rightarrow z_{t+2} \rightarrow \cdots\) |
| Model components | Representation \(h(o_t)\), Dynamics \(g(s,a)\), Prediction \(f(s)\) | Tokenizer / latent encoder, stochastic transformer dynamics model, reward/value/policy heads |
| Computation | Search-based, often CPU-heavy, not end-to-end differentiable through search | GPU-friendly, transformer-based, trained through batched imagined trajectories |
| Learning loop | Tree search generates improved policies; network distils them via supervised losses | Actor–critic trained on imagined trajectories generated by the transformer world model |
| Search structure | Discrete branching, value backups | Sequential imagination, typically no explicit branching tree search |
| Output policy | Derived from visit counts in the search tree | Learned directly through gradient updates from imagined rollouts |
| Analogy | “Plan by explicit search” | “Learn through transformer imagination” |

Policy Gradient: Proximal Policy Optimisation (PPO)

PPO Fine tuning ChatGPT & human feedback (Revisited)

- Proximal policy optimisation (PPO) is used by GPT-3.5 onwards

PPO in Agentic AI

PPO is becoming popular for Agentic AI modes, and is used in

- GPT’s Operator, and

- Claude’s Computer Use modes

PPO is also becoming popular for World Models in robotics, and is used in

- Vision, Language & Action (VLA) attention-based transformers

- Robot RT-X transformers

Proximal Policy Optimisation (PPO) for LLMs

PPO treats the LLM as a policy over tokens and updates it using reward feedback.

The purpose is to make better responses more likely, but without letting the model drift too far in a single update. This makes reinforcement-learning-based fine-tuning much more stable.

- generate responses

- score them with a reward model or preference signal

- update the model toward higher-reward outputs

- constrain the update so the change remains small

PPO is a stability-oriented way to nudge an LLM toward higher-reward behaviour.

Proximal Policy Optimisation (PPO) for LLMs (continued)

Goal: Stable, sample-efficient policy improvement

Idea: Constrain how far the new policy moves from the old one at each update of the actor-critic cycle

Policy Objective Functions (Revisited)

From a policy gradient perspective, the true objective function \(J^{{\pi}_{\theta}}(\theta)\) over policy \(\pi_{\theta}\) is defined as follows

\[ J^{{\pi}_{\theta}}(\theta) = \mathbb{E}_{\tau \sim \pi_{\theta}} [G_0] = V^{\pi_{\theta}}(d_0) \]

where \(d_0\) is the distribution over initial states at the start of each episode \(\tau\)

However, there are two problems in the actor-critic setting:

1. Computing \(J^{{\pi}_{\theta}}(\theta)\) exactly would require integrating over all trajectories, \(\tau\), of the current policy, which is impractical

2. If we update the parameters \(\theta\), this changes the trajectory distribution and hence the objective value during the optimisation process, leading to (circular) feedback

We therefore need a surrogate objective independent of the trajectory distribution under the new policy \(\color{blue}{\pi_{\theta}}\) we are building

Surrogate Objective

Combining the policy-gradient theorem with importance sampling from the old policy \(\pi_{\theta_{\text{old}}}\), we can define the importance ratio

\[ r_t(\theta) \;=\; \frac{\pi_\theta(a_t\mid s_t)}{\pi_{\theta_{\text{old}}}(a_t\mid s_t)} \qquad \]

We now define the surrogate objective \(L_{PG}\) for the true objective

\[ L_{PG}(\theta) \;=\; \mathbb{E}_t\!\big[\, r_t(\theta)\,\hat A_t \,\big] \]

Where \(\hat{A}_t\) captures how much better action \(a_t\) was than the state’s average

\(\hat{A}_t\) is an estimator of the true advantage function \(A^{\pi}\)

\(A^{\pi}(s_t,a_t) = Q^{\pi}(s_t, a_t) - V^{\pi}(s_t)\)
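A small NumPy illustration of the importance ratio and the surrogate \(L_{PG}\). Ratios are computed from log-probabilities, as is usual in practice (for an LLM these would be token log-probabilities under the new and old policies); the numbers are hypothetical. When \(\theta = \theta_{\text{old}}\) every ratio equals 1, and the gradient of \(L_{PG}\) then coincides with the ordinary policy gradient.

```python
import numpy as np

def importance_ratio(logp_new, logp_old):
    """r_t(theta) = pi_theta(a_t|s_t) / pi_theta_old(a_t|s_t), computed from log-probabilities."""
    return np.exp(logp_new - logp_old)

def surrogate_pg(logp_new, logp_old, adv):
    """Sample estimate of L_PG(theta) = E_t[ r_t(theta) * A_hat_t ]."""
    return float(np.mean(importance_ratio(logp_new, logp_old) * adv))

logp_old = np.log(np.array([0.5, 0.2, 0.1]))
logp_new = np.log(np.array([0.6, 0.2, 0.05]))
adv = np.array([1.0, 0.5, -1.0])
print(importance_ratio(logp_new, logp_old))  # approximately [1.2, 1.0, 0.5]
print(surrogate_pg(logp_new, logp_old, adv))  # approximately (1.2 + 0.5 - 0.5) / 3 = 0.4
```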

Clipped Surrogate (Core Idea)

Trust-region theory, which bounds policy improvement in terms of the Kullback-Leibler (KL) divergence between the old and new policies, tells us we want improvement without overly large steps in policy space, so we define

\[ L^{\text{CLIP}}(\theta) \;=\; \mathbb{E}_t\! \left[ \min\!\Big( r_t(\theta)\,\hat A_t,\; \mathrm{clip}\!\big(r_t(\theta),\,1-\epsilon,\,1+\epsilon\big)\,\hat A_t \Big) \right] \]

If \(r_t(\theta)\) leaves the interval \([1-\epsilon,\,1+\epsilon]\), the objective is clipped.

Typical values are \(\epsilon \in [0.1,\,0.2]\).

Prevents destructive updates while preserving ascent direction

The clipped surrogate objective in PPO plays a stabilising role similar in spirit to compatible function approximation: both aim to keep policy-gradient estimates accurate with respect to the true policy improvement direction, although clipping is a heuristic rather than a guarantee of unbiasedness.
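The four sign/ratio cases can be checked directly. This NumPy sketch computes the per-sample clipped term with \(\epsilon = 0.2\) for hypothetical ratios and advantages. Taking the minimum makes the objective a lower (pessimistic) bound on the unclipped surrogate: improvements are capped once the ratio leaves \([1-\epsilon, 1+\epsilon]\), but penalties are not.

```python
import numpy as np

def clipped_terms(ratio, adv, eps=0.2):
    """Per-sample PPO terms min(r*A, clip(r, 1-eps, 1+eps)*A)."""
    unclipped = ratio * adv
    clipped = np.clip(ratio, 1.0 - eps, 1.0 + eps) * adv
    return np.minimum(unclipped, clipped)

def l_clip(ratio, adv, eps=0.2):
    """L^CLIP: the mean of the per-sample clipped terms."""
    return float(np.mean(clipped_terms(ratio, adv, eps)))

ratio = np.array([1.5, 0.5, 0.5, 1.5])
adv = np.array([1.0, -1.0, 1.0, -1.0])
print(clipped_terms(ratio, adv))
# 1.5,  A>0: gain capped at 1.2     | 0.5, A<0: capped at -0.8 (no gain from pushing lower)
# 0.5,  A>0: unclipped 0.5 kept     | 1.5, A<0: unclipped -1.5 kept (full penalty)
print(l_clip(ratio, adv))
```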

Complete PPO loss

\[ L^{\text{PPO}}(\theta) = \mathbb{E}_t\!\Big[{\color{blue}{L^{\text{CLIP}}(\theta)}} - c_1\,{\color{red}{\big(V_\theta(s_t)-V_t^{\text{target}}\big)^2}} + c_2\,\mathcal{H}\!\left[\pi_\theta(\cdot\mid s_t)\right] \Big] \] \(\;\;\;\;\;\;\;\;\;\;\;\;\;\;\)where \(c_1, c_2\) are coefficients

The actor's policy gradient (surrogate objective) is \(\color{blue}{L^{CLIP}(\theta)}\)

- This encourages the policy to increase the probability of actions with positive advantage and decrease it for negative ones

The critic's value loss is \(\color{red}{\big(V_\theta(s_t)-V_t^{\text{target}}\big)^2}\)

- This trains the network to predict correct returns (mean-squared error).

PPO Entropy bonus

The entropy bonus \(\mathcal{H}\) encourages exploration

\[ \mathcal{H}\big[\pi_\theta(\cdot \mid s_t)\big] = - \sum_{a} \pi_\theta(a \mid s_t) \log \pi_\theta(a \mid s_t) \]

The entropy term encourages exploration by rewarding stochastic (uncertain) policies.

It’s high when the policy is uncertain or “spread out” (exploratory).

It’s low when the policy is confident or deterministic.

The dot “\(\cdot\)” in \(\pi_{\theta}(\cdot | s_t)\) ranges over all possible actions, i.e. it denotes the vector of probabilities \(\pi_{\theta}(a_1 \mid s_t), \pi_{\theta}(a_2 \mid s_t), \ldots\)

In practice this maintains stochasticity until policy becomes more confident or deterministic.
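Putting the three terms together for a discrete action space (for an LLM, the actions would be tokens), a NumPy sketch of the per-batch PPO objective might look as follows. It only evaluates the objective; in practice it is negated and minimised with an automatic-differentiation framework. The coefficients `c1=0.5`, `c2=0.01` and `eps=0.2` are common illustrative choices, not values taken from this Module.

```python
import numpy as np

def log_softmax(logits):
    """Row-wise log pi(a|s) from unnormalised logits, computed stably."""
    z = logits - logits.max(axis=-1, keepdims=True)
    return z - np.log(np.exp(z).sum(axis=-1, keepdims=True))

def ppo_objective(logits, actions, logp_old, adv, values, v_target, eps=0.2, c1=0.5, c2=0.01):
    """L^PPO = E_t[ L^CLIP - c1 * (V(s_t) - V_target)^2 + c2 * H[pi(.|s_t)] ], to be maximised."""
    logp_all = log_softmax(logits)                         # log pi_theta(a|s_t) for every action
    logp = logp_all[np.arange(len(actions)), actions]      # log-prob of the action actually taken
    ratio = np.exp(logp - logp_old)
    l_clip = np.minimum(ratio * adv, np.clip(ratio, 1 - eps, 1 + eps) * adv)
    value_loss = (values - v_target) ** 2                  # critic: squared error
    entropy = -(np.exp(logp_all) * logp_all).sum(axis=-1)  # H = -sum_a pi log pi
    return float(np.mean(l_clip - c1 * value_loss + c2 * entropy))

# Uniform two-action policy, unchanged since data collection (all ratios are 1)
print(ppo_objective(np.zeros((3, 2)), np.array([0, 1, 0]), np.log(np.full(3, 0.5)),
                    np.array([1.0, -1.0, 0.5]), np.zeros(3), np.zeros(3)))
```

In this example the ratios are all 1, so \(L^{\text{CLIP}}\) reduces to the mean advantage, the value error is zero, and the entropy of each uniform two-action distribution is \(\log 2\).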

Generalised Advantage Estimation (GAE)

In practice, PPO uses a low-variance, low-bias estimate of the advantage \(A^\pi(s_t,a_t)\).

TD error: \[ \delta_t \;=\; r_t + \gamma\,V_\phi(s_{t+1}) - V_\phi(s_t) \]

GAE-\(\lambda\): \[ \hat A_t^{(\lambda)} \;=\; \sum_{l=0}^{\infty} (\gamma\lambda)^l\,\delta_{t+l} \;=\; \delta_t + \gamma\lambda\,\delta_{t+1} + (\gamma\lambda)^2\,\delta_{t+2} + \cdots \]

Return/target used for critic \[ \hat V_t^{\text{target}} \;=\; \hat A_t^{(\lambda)} + V_\phi(s_t) \]

- \(\lambda\in[0,1]\) trades bias \(\leftrightarrow\) variance, typical PPO: \(\gamma \approx 0.99\), \(\lambda \approx 0.95\).
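GAE is computed with a single backward pass over a rollout, using the recursion \(\hat A_t = \delta_t + \gamma\lambda\,\hat A_{t+1}\). This NumPy sketch assumes one rollout of length \(T\) with a bootstrap value \(V_\phi(s_T)\) appended to `values`, plus a `dones` flag (an assumption not in the formulas above) that stops bootstrapping across episode boundaries.

```python
import numpy as np

def gae(rewards, values, dones, gamma=0.99, lam=0.95):
    """GAE-lambda advantages and critic targets for one rollout of length T.

    rewards[t], dones[t] for t = 0..T-1; values has length T+1 (includes bootstrap V(s_T)).
    dones[t] = 1 if the episode ended after step t (no bootstrapping across the boundary).
    """
    T = len(rewards)
    adv = np.zeros(T)
    running = 0.0
    for t in reversed(range(T)):
        not_done = 1.0 - dones[t]
        delta = rewards[t] + gamma * values[t + 1] * not_done - values[t]  # TD error delta_t
        running = delta + gamma * lam * not_done * running                 # A_t = delta_t + gamma*lam*A_{t+1}
        adv[t] = running
    v_target = adv + values[:T]                                            # V_target = A_t + V(s_t)
    return adv, v_target

adv, v_target = gae(np.array([1.0, 0.0, 1.0]), np.array([0.5, 0.4, 0.3, 0.2]), np.array([0.0, 0.0, 1.0]))
print(adv, v_target)
```

Setting `lam=0` recovers the one-step TD error \(\delta_t\) (low variance, more bias), while `lam=1` with `gamma=1` and zero values recovers the plain reward-to-go (Monte Carlo return, higher variance).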

PPO Algorithm

Repeat

\(\;\;\;\) Collect trajectories with \(\pi_{\theta_{\text{old}}}\)

\(\;\;\;\) Compute returns and advantages using GAE-\(\lambda\)

\(\;\;\;\) Optimise \(L^{\text{CLIP}}\) for \(K\) epochs over mini-batches

\(\;\;\;\) Update old params: \(\theta_{\text{old}} \leftarrow \theta\)

Until a stop condition holds (e.g., total timesteps \(\geq T\), or moving-average return \(\geq R_{\text{target}}\), or max iterations reached)

- Multiple epochs over the same batch are okay because clipping limits drift
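The loop can be made concrete on a toy problem. This self-contained NumPy sketch runs PPO on a hypothetical 3-armed bandit (one-step episodes, so the return is just the reward), with a softmax policy over three logits, a batch-mean reward baseline standing in for the learned critic and GAE, full-batch updates instead of mini-batches, and a hand-derived gradient of \(L^{\text{CLIP}}\). It illustrates the structure of the algorithm, not a production implementation.

```python
import numpy as np

rng = np.random.default_rng(0)
ARM_MEANS = np.array([0.0, 0.5, 1.0])  # hypothetical bandit; arm 2 is the best action
EPS, LR, BATCH, K_EPOCHS, MAX_ITERS = 0.2, 0.5, 64, 4, 50

def probs(theta):
    """Softmax policy pi_theta over the three arms."""
    e = np.exp(theta - theta.max())
    return e / e.sum()

def clip_grad(theta, actions, logp_old, adv, eps=EPS):
    """Gradient of mean_t min(r_t A_t, clip(r_t, 1-eps, 1+eps) A_t) w.r.t. the logits."""
    p = probs(theta)
    ratio = p[actions] / np.exp(logp_old)
    # The clipped (constant) branch is selected, giving zero gradient, when the ratio has
    # already moved past the boundary in the direction the advantage rewards.
    clipped = ((adv > 0) & (ratio > 1 + eps)) | ((adv < 0) & (ratio < 1 - eps))
    grad_logp = np.eye(len(theta))[actions] - p   # d log pi(a) / d theta for a softmax
    weight = np.where(clipped, 0.0, ratio * adv)  # d(r_t A_t) = r_t A_t * d log pi(a_t)
    return (weight[:, None] * grad_logp).mean(axis=0)

theta = np.zeros(3)
for _ in range(MAX_ITERS):                          # Repeat ... until max iterations
    p_old = probs(theta)                            # theta_old <- theta (frozen copy)
    actions = rng.choice(3, size=BATCH, p=p_old)    # collect experience with pi_theta_old
    rewards = ARM_MEANS[actions] + 0.1 * rng.normal(size=BATCH)
    adv = rewards - rewards.mean()                  # advantage with a batch-mean baseline
    logp_old = np.log(p_old[actions])
    for _ in range(K_EPOCHS):                       # K epochs over the same batch
        theta = theta + LR * clip_grad(theta, actions, logp_old, adv)  # gradient ascent

print(np.round(probs(theta), 3))  # probability mass should concentrate on arm 2
```

Reusing each batch for several epochs is safe here for the reason given above: once a sample's ratio leaves the clip range in the favourable direction, its gradient contribution drops to zero.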

Why PPO works (intuition)

First-order solution method

- Uses plain gradient (first-order) updates; unlike TRPO, it needs no constrained optimisation step with second-order (Fisher-information) computations.

Trust-region-like behaviour via clipping

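- Trust-region methods only take steps that stay within a region where the local approximation of the objective is reliable; TRPO defines this region by bounding the KL divergence between the old and new policies, and PPO's clipping approximates the same effect without an explicit constraint.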

Robust across discrete/continuous control

- Strong baseline performance in practice

Policy Optimisation Techniques

DeepSeek-R1 uses Group Relative Policy Optimization (GRPO) as the core of its reasoning-focused RL training; GRPO operates over the generated reasoning token stream.

Shao et al. DeepSeekMath: Pushing the Limits of Mathematical Reasoning in Open Language Models, arXiv:2402.03300v3, pp1-30, 2024
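In DeepSeekMath (Shao et al., 2024), GRPO drops PPO's critic: for each prompt it samples a group of completions, scores them, and uses each completion's reward, normalised by the group mean and standard deviation, as the advantage for all of that completion's tokens (the outcome-supervision variant). The sketch below covers only that normalisation; the population standard deviation and the small `eps` guard are assumptions, and the clipped policy update and the KL penalty to a reference model are omitted.

```python
import statistics


def grpo_group_advantages(rewards, eps=1e-8):
    """Group-relative advantages: A_i = (r_i - mean(r)) / (std(r) + eps).

    `rewards` holds one scalar reward per sampled completion of the SAME prompt.
    Uses the population standard deviation (an assumption; implementations vary).
    """
    mean_r = statistics.fmean(rewards)
    std_r = statistics.pstdev(rewards)
    return [(r - mean_r) / (std_r + eps) for r in rewards]


# Hypothetical group of 4 completions scored by a correct (1) / incorrect (0) rule.
group_rewards = [1.0, 0.0, 1.0, 0.0]
advantages = grpo_group_advantages(group_rewards)
print([round(a, 3) for a in advantages])
```

Completions that beat their siblings get positive advantages and the rest negative ones; if every completion receives the same reward, all advantages are zero and that prompt contributes no policy-gradient signal.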

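DeepSeek-R1-Zero, the precursor to DeepSeek-R1, uses a “pure RL” approach: it improves by trial and error against rule-based rewards, without supervised fine-tuning on human-labelled reasoning data, rather like MuZero learning without human game data. (The final DeepSeek-R1 adds a small supervised “cold-start” stage before RL.)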

GRPO can also be used for knowledge distillation. In standard distillation, a student model mimics a teacher model via supervised fine-tuning (SFT) on the teacher's outputs; with GRPO, the “Teacher” model instead acts as the reward function.

Policy Optimisation Techniques (continued)

Direct Preference Optimisation (DPO)

Rafailov et al. Direct Preference Optimization: Your Language Model is Secretly a Reward Model, arXiv:2305.18290v3, pp1-27, 2023

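While GRPO & PPO dominate online RL for reasoning, DPO remains a widely used standard for offline preference alignment: it optimises the policy directly on preference pairs, without training a separate reward model or running RL rollouts.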

The offline data must be a high-quality preference dataset, i.e. pairs of chosen vs. rejected responses to the same prompt.
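The DPO objective of Rafailov et al. (2023) scores each preference pair by how much more the policy \(\pi_\theta\) raises the log-probability of the chosen response \(y_w\) than of the rejected response \(y_l\), relative to a frozen reference model \(\pi_{\text{ref}}\): \(\mathcal{L}_{\text{DPO}} = -\log\sigma\big(\beta\big[(\log\pi_\theta(y_w|x)-\log\pi_{\text{ref}}(y_w|x)) - (\log\pi_\theta(y_l|x)-\log\pi_{\text{ref}}(y_l|x))\big]\big)\). A minimal sketch that evaluates it from precomputed sequence log-probabilities; the function names and \(\beta = 0.1\) are illustrative assumptions.

```python
import math


def softplus(x):
    """Numerically stable log(1 + exp(x))."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def dpo_loss(pairs, beta=0.1):
    """Mean DPO loss over preference pairs.

    Each pair is (logp_chosen, logp_rejected, ref_logp_chosen, ref_logp_rejected):
    summed token log-probabilities of the chosen / rejected responses under the
    policy and under the frozen reference model.
    """
    total = 0.0
    for lp_w, lp_l, ref_w, ref_l in pairs:
        margin = beta * ((lp_w - ref_w) - (lp_l - ref_l))
        total += softplus(-margin)  # -log(sigmoid(margin)) == softplus(-margin)
    return total / len(pairs)


# Policy identical to the reference: margin 0, loss log(2).
print(dpo_loss([(-12.0, -15.0, -12.0, -15.0)]))
# Policy has shifted probability towards the chosen response: lower loss.
print(dpo_loss([(-10.0, -18.0, -12.0, -15.0)]))
```

Because only log-probability ratios against \(\pi_{\text{ref}}\) appear, no reward model or sampling is needed during training, which is what makes DPO an offline method.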

Policy optimisation utilisation

| Foundation Model | RL/Query Optimiser | Example |
|---|---|---|
| Attention-based transformer / LLM | PPO, DPO, GRPO / CoT | GPT, Gemini, Claude, DeepSeek-R1: Thinking, Programming, Operator and Computer Use modes; OpenClaw-RL, etc. |
| Attention-based transformer / Agentic & Vision + Language + Action (VLA) | PPO | OpenClaw-RL, MiniMax, VLA, RT-X |

CoT: chain of thought.

Multi Actor-Critic: IMPALA & V-trace

Distributed RL: IMPALA & V-trace

Importance Weighted Actor-Learner Architecture (IMPALA)

In production at DeepMind, OpenAI & Google DeepRL

Decoupled actor-learner: many CPU actors generate trajectories under behaviour policy \(\mu\); a central GPU learner updates \(\pi\).

High throughput via batched unrolls (e.g., of length \(n\)); supports RNNs (LSTMs) and multi-task learning.

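Challenge: policy lag. By the time the learner consumes a trajectory, \(\pi\) has moved on from the behaviour policy \(\mu\) that generated it, so the data are off-policy.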

Solution: V-trace targets for stable off-policy learning.

Off-policy with correction that handles policy lag without sacrificing throughput

IMPALA was developed at DeepMind

Distributed RL: V-trace essentials

Define the truncated importance ratios \(\displaystyle \rho_t=\min\!\left(\bar{\rho}, \frac{\pi(a_t|x_t)}{\mu(a_t|x_t)}\right)\) and \(\displaystyle c_t=\min\!\left(\bar{c}, \frac{\pi(a_t|x_t)}{\mu(a_t|x_t)}\right)\), with \(\bar{\rho}\ge \bar{c}\).

Value target (per time \(s\))

\[
\delta_t^{V} \;=\; \rho_t\Big(r_t + \gamma\,V(x_{t+1}) - V(x_t)\Big), \qquad
v_s \;=\; V(x_s) \;+\; \sum_{t=s}^{s+n-1} \gamma^{\,t-s} \left(\prod_{i=s}^{t-1} c_i\right) \delta_t^{V}
\]

Policy gradient with V-trace advantage

\[
A_t^{\text{V-trace}} \;=\; r_t + \gamma\,v_{t+1} - V(x_t), \qquad
\nabla_\theta J \;\propto\; \rho_t\,\nabla_\theta \log \pi_\theta(a_t|x_t)\,A_t^{\text{V-trace}}
\]
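Both \(v_s\) and the advantage can be computed for a whole unroll with the backward recursion \(v_s - V(x_s) = \delta_s^V + \gamma\,c_s\big(v_{s+1} - V(x_{s+1})\big)\), which follows from the sum above when every sum is truncated at the end of the unroll (as is typical in implementations). A plain-Python sketch, assuming one unroll with no episode boundaries inside it, log importance ratios \(\log\pi - \log\mu\) supplied by the caller, and the bootstrap \(v_n = V(x_n)\); the function name `vtrace` is hypothetical.

```python
import math


def vtrace(rewards, values, bootstrap_value, log_rhos, gamma=0.99,
           rho_bar=1.0, c_bar=1.0):
    """V-trace targets v_s and policy-gradient advantages for one unroll.

    rewards[t], values[t] = V(x_t) and log_rhos[t] = log pi(a_t|x_t) - log mu(a_t|x_t)
    for t = 0..n-1; bootstrap_value = V(x_n).
    """
    n = len(rewards)
    ratios = [math.exp(lr) for lr in log_rhos]
    rhos = [min(rho_bar, r) for r in ratios]  # truncated rho_t
    cs = [min(c_bar, r) for r in ratios]       # truncated c_t
    next_values = values[1:] + [bootstrap_value]  # V(x_{t+1})

    vs = [0.0] * n
    acc = 0.0  # v_{t+1} - V(x_{t+1}); zero beyond the end of the unroll
    for t in reversed(range(n)):
        delta = rhos[t] * (rewards[t] + gamma * next_values[t] - values[t])
        acc = delta + gamma * cs[t] * acc
        vs[t] = values[t] + acc

    # A_t = r_t + gamma * v_{t+1} - V(x_t), with v_n = V(x_n)
    next_vs = vs[1:] + [bootstrap_value]
    advantages = [rewards[t] + gamma * next_vs[t] - values[t] for t in range(n)]
    return vs, advantages, rhos


vs, adv, rhos = vtrace([1.0, 1.0, 1.0], [0.0, 0.0, 0.0], 0.0, [0.0, 0.0, 0.0], gamma=1.0)
print(vs, adv, rhos)
```

When \(\pi = \mu\) and \(\bar\rho, \bar c \ge 1\) (all log-ratios zero, as in the call above), every \(\rho_t = c_t = 1\) and \(v_s\) reduces to the ordinary on-policy \(n\)-step return; the returned `rhos` are the weights on \(\nabla_\theta \log\pi_\theta\) in the policy gradient.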

Loss (typical)

\[
\mathcal{L} \;=\; \underbrace{\mathbb{E}\big[(v_s - V(x_s))^2\big]}_{\text{value}}
\;-\; \beta\,\underbrace{\mathbb{E}\big[\rho_t \log \pi(a_t|x_t)\,A_t^{\text{V-trace}}\big]}_{\text{policy}}
\;-\; \eta\,\underbrace{\mathbb{E}\big[\mathcal{H}(\pi(\cdot|x_t))\big]}_{\text{entropy}}
\]

Why it works

Truncating the importance-sampling (IS) ratios \(\rho_t, c_t\) bounds the variance of the off-policy correction at the cost of a controlled bias;

Multi-step correction handles policy lag without sacrificing throughput.

Representative efficient actor-critic methods

| Category | Example algorithms | Key strengths |
|---|---|---|
| On-policy | PPO | Stable, parallelizable, easy; standard in LLM fine-tuning (RLHF) |
| Off-policy (stochastic) | SAC | Maximum-entropy objective → robust exploration; excellent data efficiency |
| Distributed | IMPALA, V-trace | Massive scalability; production in DeepMind, OpenAI, Google DeepRL |

Efficiency and Performance Comparison

| Dimension | MuZero / Sampled MuZero / EfficientZero | PPO / SAC / IMPALA |
|---|---|---|
| Sample efficiency | Excellent when planning can reuse a model (Atari, board games) | High for off-policy (SAC); moderate for PPO |
| Wall-clock / GPU efficiency | Poor (search is serial & CPU-bound) | Very good (fully parallel on GPU) |
| Robustness & stability | Sensitive to model errors / rollout length | Stable with tuned hyper-parameters |
| Scalability to real-time tasks | Hard (search latency) | Good; used in robotics, continuous control, large-scale RL (IMPALA, V-trace) |
| Best-case performance | Outstanding in structured domains (Go, Atari) | State-of-the-art in most continuous-control and real environments |

Vision & Robot Transformers: ViTs & RT-X

Example: Vision Transformers (ViTs)

Since around 2020, attention-based Transformers have competed with, and often surpassed, CNNs on large-scale vision benchmarks.

Image \(\rightarrow\) patches \(\rightarrow\) tokens \(\rightarrow\) transformer

Patchify the image: split an image of size \(H\times W\times C\) into non-overlapping \(P\times P\) patches, giving \(N=\frac{HW}{P^2}\) tokens.

Linear patch embedding: flatten each patch to \(x_i\in\mathbb{R}^{P^2 C}\) and project it as \(z_i^0 = W_E x_i + b_E \in \mathbb{R}^D\).

(Often implemented as a convolution with kernel size and stride \(P\).)
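A plain-Python sketch of the patchify and linear-embedding steps, assuming the image is a nested list indexed as `image[row][col][channel]` with \(H\) and \(W\) divisible by \(P\); the helper names and the row-major patch order are illustrative choices. For the common ViT setting of a \(224\times224\) image with \(P=16\), the token formula gives \(N = 224^2/16^2 = 196\).

```python
def patchify(image, P):
    """Split an H x W x C image (nested lists) into N = H*W / P^2 flattened patches.

    Patches are returned in row-major order; each has length P*P*C.
    """
    H, W = len(image), len(image[0])
    if H % P or W % P:
        raise ValueError("H and W must be divisible by the patch size P")
    patches = []
    for top in range(0, H, P):
        for left in range(0, W, P):
            flat = []
            for r in range(top, top + P):
                for c in range(left, left + P):
                    flat.extend(image[r][c])  # append all C channels of pixel (r, c)
            patches.append(flat)
    return patches


def embed_patches(patches, W_E, b_E):
    """z_i = W_E x_i + b_E, with W_E given as D rows of length P*P*C."""
    return [[sum(w * x for w, x in zip(row, patch)) + b for row, b in zip(W_E, b_E)]
            for patch in patches]


# 4 x 4 single-channel image whose pixel value is 4*row + col; P = 2 gives N = 4.
image = [[[4 * r + c] for c in range(4)] for r in range(4)]
patches = patchify(image, P=2)
tokens = embed_patches(patches, W_E=[[1, 1, 1, 1], [1, 0, 0, 0]], b_E=[0, 0])
print(patches)
print(tokens)
```

A convolution with kernel size and stride \(P\) computes the same projection for every patch in one operation, which is why frameworks usually implement this step that way.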

Vision Transformers - Tokens and Transformer Encoding

Add a class token and positions: prepend a learnable \([\mathrm{CLS}]\) token and add learnable position embeddings \(p_i\),

\(\tilde{z}_i^0 = z_i^0 + p_i\), giving the sequence \([\tilde{z}_{\text{CLS}}^0, \tilde{z}_1^0,\ldots,\tilde{z}_N^0]\).

Transformer encoder stack (repeated \(L\) times): multi-head self-attention, where each head computes

\(\text{SA}(X) = \text{softmax}\!\left(\frac{QK^\top}{\sqrt{d_k}}\right)V\) with \(Q = XW_Q\), \(K = XW_K\), \(V = XW_V\),

followed by an MLP; both sublayers use residual connections and layer normalisation.

Prediction head: take the final \([\mathrm{CLS}]\) representation (or pool all tokens) \(\to\) linear head \(\to\) class probabilities.
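To make the attention formula concrete, here is a single-head self-attention sketch in plain Python that also prepends a \([\mathrm{CLS}]\) token and adds position embeddings. It omits multi-head splitting, the MLP, residuals and layer normalisation, and all weights are supplied by the caller; names such as `vit_input_sequence` are hypothetical.

```python
import math


def matmul(A, B):
    """(m x k) @ (k x n) for nested lists."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]


def softmax(xs):
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def vit_input_sequence(patch_tokens, cls_token, positions):
    """Prepend [CLS] and add one position embedding per token (N + 1 in total)."""
    seq = [cls_token] + patch_tokens
    return [[z + p for z, p in zip(tok, pos)] for tok, pos in zip(seq, positions)]


def self_attention(X, W_Q, W_K, W_V):
    """Single head: softmax(Q K^T / sqrt(d_k)) V with Q = X W_Q, K = X W_K, V = X W_V."""
    Q, K, V = matmul(X, W_Q), matmul(X, W_K), matmul(X, W_V)
    d_k = len(K[0])
    out = []
    for q in Q:
        # Every token attends to every token (global attention, no mask).
        weights = softmax([sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in K])
        out.append([sum(w * v[j] for w, v in zip(weights, V)) for j in range(len(V[0]))])
    return out


X = vit_input_sequence(patch_tokens=[[1.0, 0.0], [0.0, 1.0]],
                       cls_token=[0.0, 0.0],
                       positions=[[0.0, 0.0], [0.0, 0.0], [1.0, 1.0]])
identity = [[1.0, 0.0], [0.0, 1.0]]
zeros = [[0.0, 0.0], [0.0, 0.0]]
# With W_Q = 0 all scores are equal, so every output row is the mean of the value rows.
print(self_attention(X, zeros, identity, identity))
```

Each output row mixes all \(N+1\) value vectors, so the attention cost grows quadratically with the number of tokens.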

Note: smaller \(P\) \(\Rightarrow\) more tokens (detail ↑, cost ↑); larger \(P\) \(\Rightarrow\) fewer tokens (detail ↓, cost ↓).

Variants such as Swin compute attention within local windows that are shifted between layers, for scalability; ViT uses global attention over all tokens.

Example: RT-X Robot Transformers

RT-X:

Transformers are increasingly used in robotics as well (e.g. RT-1, RT-2 and RT-X from Google DeepMind).

- Large-scale imitation learning across many robots.

The “RT family” applies attention jointly across vision, language and control.

- They use Vision-Language-Action (VLA) transformers.

References

Transformers: Vaswani et al. (2017), Attention Is All You Need, NeurIPS 2017, arXiv:1706.03762

PPO: Schulman et al. (2017), Proximal Policy Optimization Algorithms, arXiv:1707.06347

DPO: Rafailov et al. (2023), Direct Preference Optimization, NeurIPS 2023, arXiv:2305.18290

GRPO: Shao et al. (2024), DeepSeekMath, arXiv:2402.03300

DeepSeek-R1: Guo et al. (2025), DeepSeek-R1 incentivizes reasoning in LLMs through reinforcement learning, Nature, https://www.nature.com/articles/s41586-025-09422-z

IMPALA: Espeholt et al. (2018), IMPALA, ICML 2018, arXiv:1802.01561

STORM: Zhang et al. (2023), STORM: Efficient Stochastic Transformer based World Models for Reinforcement Learning, NeurIPS 2023, arXiv:2310.09615

OpenClaw-RL: Wang et al. (2026), OpenClaw-RL: Train Any Agent Simply by Talking, arXiv:2603.10165

Ace (Sony-AI): Durr et al. (2026), Outplaying elite table tennis players with an autonomous robot, Nature, https://www.nature.com/articles/s41586-026-10338-5

PDDL with LLMs: Silver et al. (2024), Generalized planning in PDDL domains with pretrained large language models, AAAI 2024, https://ojs.aaai.org/index.php/AAAI/article/view/30006