03 Heuristic Search

How to Relax Informally

Informal definition of Relaxation

Relaxation

Relaxation means simplifying a problem by taking the solution to a simpler problem as the heuristic estimate for the solution to the actual problem.

You have a problem, \(\mathcal{P}\), whose perfect heuristic \(h^*\) you wish to estimate.

You define a simpler problem, \(\mathcal{P}^{\prime}\), whose perfect heuristic \(h^{\prime *}\) can be used to estimate \(h^*\).

You define a transformation, \(r\), that simplifies instances from \(\mathcal{P}\) into instances \(\mathcal{P}^{\prime}\).

Given \(\Pi \in \mathcal{P}\), you estimate heuristic \(h^*(\Pi)\) by computing heuristic \(h^{\prime *}(r(\Pi))\).

Route-Finding

Straight-line distances from Bucharest to

Relaxation in Route-Finding?

Straight-line distances from Bucharest to

How do we derive straight-line distances in route-finding by relaxation?:

- Problem \(\mathcal{P}\)?: Route finding.

- Simpler problem \(\mathcal{P}^{\prime}\)?:

- Route finding for birds.

- Perfect heuristic \(h^{\prime *}\) for \(\mathcal{P}^{\prime}\)?:

- Straight-line distance.

- Relaxation Transformation \(r\)?:

- Transform instances of \(\mathcal{P}\) into instances of \(\mathcal{P}^{\prime}\) by pretending you are a bird.

The 8-Puzzle

- Action: move(X,Y)

- Pre: blank(Y), adjacent(X,Y)

- Add: blank(X)

- Del: blank(Y)

Relaxation in the 8-Puzzle?

A perfect heuristic \(h^*\) for \(\mathcal{P}\): Actions = A tile can move from square \(X\) to square \(Y\) if \(X\) is adjacent to \(Y\), adjacent(X,Y), and \(Y\) is blank, i.e. blank(Y).

How do we derive the Manhattan distance heuristic?:

- \(\mathcal{P}^{\prime}\): Actions = A tile can move from square \(X\) to square \(Y\) if \(X\) is adjacent to \(Y\).

How do we derive a heuristic which counts the number of misplaced tiles?:

\(\mathcal{P}^{\prime}\): Actions = A tile can move from square \(X\) to square \(Y\).

\(h^{\prime *}\) (respectively \(r\)) in both: optimal cost in \(\mathcal{P}^{\prime}\) (respectively use different actions).

Here, Manhattan distance = 18 and misplaced tiles = 8.

Travelling Salesman Problem (TSP) in Australia

Propositions \(P\): \(\mathit{at}(X)\) for \(X \in \{\mathit{Sy}, \mathit{Ad}, \mathit{Br}, \mathit{Pe}, \mathit{Da}\}\); \(\mathit{v}(X)\) for \(x \in \{\mathit{Sy}, \mathit{Ad}, \mathit{Br}, \mathit{Pe}, \mathit{Da}\}\).

Actions \(a \in A\): \(\mathit{drive}(X,Y)\) where \(X,Y\) is a road; \(\mathit{pre}_a = \{\mathit{at}(X)\}\), \(\mathit{add}_a = \{\mathit{at}(Y), \mathit{v}(Y)\}\), \(\mathit{del}_a = \{\mathit{at}(X)\}\).

Initial state \(I\): \(\mathit{at}(\mathit{Sy}), \mathit{v}(\mathit{Sy})\).

Goal \(G\): \(\mathit{at}(\mathit{Sy}), \mathit{v}(X)\) for all \(x\).

“Goal-Counting” Relaxation for TSP in Australia?

Propositions \(P\): \(\mathit{at}(X)\) for \(X \in \{\mathit{Sy}, \mathit{Ad}, \mathit{Br}, \mathit{Pe}, \mathit{Da}\}\); \(\mathit{v}(X)\) for \(X \in \{\mathit{Sy}, \mathit{Ad}, \mathit{Br}, \mathit{Pe}, \mathit{Da}\}\).

Actions \(a \in A\): \(\mathit{drive}(X,Y)\) where \(X,Y\) is a road; \(\mathit{pre}_a = \{\mathit{at}(X)\}\), \(\mathit{add}_a = \{\mathit{at}(y), \mathit{v}(y)\}\), \(\mathit{del}_a = \{\mathit{at}(X)\}\).

Initial state \(I\): \(\mathit{at}(\mathit{Sy}), \mathit{v}(\mathit{Sy})\).

Goal \(G\): \(\mathit{at}(\mathit{Sy}), \mathit{v}(X)\) for all \(X\).

Let’s act as if we can achieve each goal directly:

Problem \(\mathcal{P}\): All STRIPS planning tasks; Simpler problem \(\mathcal{P}^{\prime}\): All STRIPS planning tasks with empty preconditions and deletes; Perfect heuristic \(h^{\prime *}\) for \(\mathcal{P}^{\prime}\): Optimal plan cost \((= h^{\prime *})\).

Transformation \(r\) ?:

- Drop the preconditions and deletes.

Heuristic value here?:

- 4 (since each applicable drive action can be treated as achieving one visit fact directly, and since deletes are ignored, a relaxed plan can achieve the goal in 4 actions).

How to Relax Formally

Relaxation: Definition for Planning

Definition: Relaxation

Let \(\mathcal{P}\) be a class of planning problems and \(h^*(\Pi)\) denote the optimal plan cost of problem \(\Pi \in \mathcal{P}\).

A relaxation is a triple \(\mathcal{R} = (\mathcal{P}', r, h'^*)\) where

- \(\mathcal{P}'\) is another class of planning problems,

- \(r : \mathcal{P} \to \mathcal{P}'\) is a transformation between the problems, and

- \(h'^*(\Pi')\) is the optimal plan cost of \(\Pi' \in \mathcal{P}'\), such that

for all \(\Pi \in \mathcal{P}\) \(\,\,{\color{blue}h^{\prime *}(r(\Pi)) \le h^*(\Pi)}\), and the heuristic induced by \(\mathcal{R}\) is \({\color{blue}\,h^{\mathcal R}(\Pi) = h^{\prime *}(r(\Pi))}\).

The relaxation is:

Native if \(\mathcal P' \subseteq \mathcal P\) and \(h^{\prime *} = h^*\), i.e. \(h^{\prime *}(\Pi') = h^*(\Pi')\) for all \(\Pi' \in \mathcal P'\);

Efficiently constructible if there exists a polynomial-time algorithm that, given \(\Pi \in \mathcal{P}\), computes \(r(\Pi)\);

Efficiently computable if there exists a polynomial-time algorithm that, given \(\Pi' \in \mathcal{P}^{\prime}\), computes \(h^{\prime *}(\Pi')\).

For example, the steps involved in goal counting:

You have a problem, \(\mathcal{P}\), whose perfect heuristic \(h^*\) you wish to estimate.

You define a simpler problem, \(\mathcal{P}^{\prime}\), whose perfect heuristic \(h^{\prime *}\) can be used to admissibly estimate \(h^*\).

You define a transformation, \(r\), from \(\mathcal{P}\) into \(\mathcal{P}^{\prime}\).

Given \(\Pi \in \mathcal{P}\), you estimate \(h^*(\Pi)\) by \(h^{\prime *}(r(\Pi))\).

Hence goal counting just approximates \(h^{\prime *}\) by number-of-false-goals (i.e. the number of goals remaining to be achieved).

Relaxations: Illustration (Example: Route-finding)

Problem \(\mathcal{P}\): Route finding

Simpler problem \(\mathcal{P}^{\prime}\):

- Route finding for birds

Perfect heuristic \(h^{\prime *}\) for \(\mathcal{P}^{\prime}\):

- Straight-line distance.

Transformation \(r\)?:

- Pretend you are a bird

Native Relaxations: Illustration (Example: Goal-counting)

Problem \(\mathcal{P}\): All STRIPS planning tasks.

Simpler problem \(\mathcal{P}^{\prime}\): All STRIPS planning tasks with empty preconditions and deletes

Perfect heuristic \(h^{\prime *}\) for \(\mathcal{P}^{\prime}\): Optimal plan cost \(= h^{*}\)

Transformation \(r\)?:

- Drop the preconditions and deletes.

Relaxation in Route-Finding: Properties?

Straight-line distances from Bucharest to

Assume relaxation \(\mathcal{R} = (\mathcal{P}^{\prime}, r, h^{\prime *})\): Straight-line distances (you are pretending to be a bird!)

Native?:

- No, birds don’t do route-finding (their flight is equivalent to trivial maps with direct routes between everywhere.)

Efficiently constructible?:

- Yes (pretend you are a bird).

Efficiently computable?:

- Yes (measure straight-line distance).

Relaxation in the 8-Puzzle: Properties?

Relaxation \(\mathcal{R} = (\mathcal{P}^{\prime}, r, h^{\prime *})\): Use more generous actions rule to obtain Manhattan distance.

Native?:

- No, with the modified rules, as it’s not the “same puzzle” anymore.

Efficiently constructible?:

- Yes (exchange action set).

Efficiently computable?:

- Yes (count misplaced tiles/sum up Manhattan distances).

How to Relax if not efficiently constructible or computable?

What if \(\mathcal{R}\) is not efficiently constructible?

Either (a) approximate \(r\), or (b) design \(r\) in a way so that it will typically be feasible, or (c) just live with it and hope for the best.

The vast majority of known relaxations (in planning) are efficiently constructible.

What if \(\mathcal{R}\) is not efficiently computable?

Either (a) approximate \(h^{\prime *}\), or (b) design \(h^{\prime *}\) in a way so that it will typically be feasible, or (c) just live with it and hope for the best.

Many known relaxations (in planning) are efficiently computable, some aren’t. The latter use (a); (b) and (c) are not used anywhere right now.

Goal-Counting Relaxation in TSP: Properties?

Propositions \(P\): \(\mathit{at}(X)\) for \(X \in \{\mathit{Sy}, \mathit{Ad}, \mathit{Br}, \mathit{Pe}, \mathit{Da}\}\); \(\mathit{v}(X)\) for \(X \in \{\mathit{Sy}, \mathit{Ad}, \mathit{Br}, \mathit{Pe}, \mathit{Da}\}\).

Actions \(a \in A\): \(\mathit{drive}(X,Y)\) where \(X,Y\) have a road; \(\mathit{pre}_a = \{\mathit{at}(X)\}\), \(\mathit{add}_a = \{\mathit{at}(y), \mathit{v}(Y)\}\), \(\mathit{del}_a = \{\mathit{at}(X)\}\).

Initial state \(I\): \(\mathit{at}(\mathit{Sy}), \mathit{v}(\mathit{Sy})\).

Goal \(G\): \(\mathit{at}(\mathit{Sy}), \mathit{v}(X)\) for all \(X\).

Relaxation \(\mathcal{R} = (\mathcal{P}^{\prime}, r, h^{\prime *})\): Remove preconditions and deletes, then use \(h^*\).

Native?:

- Yes: planning with empty preconditions and deletes is a special case of planning (i.e. a sub-class of ).

Efficiently constructible?:

- Yes: drop preconditions and deletes.

Efficiently computable?:

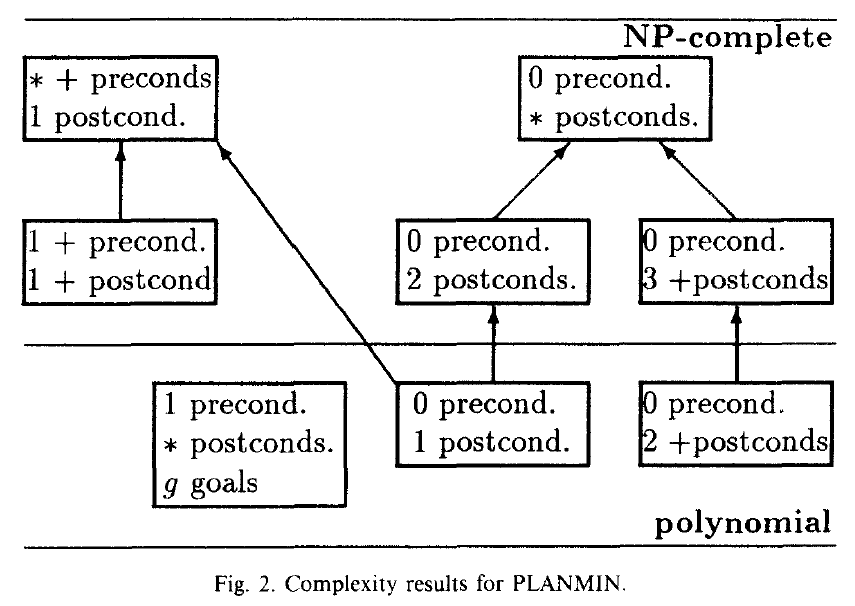

- No, Optimal relaxed planning is still \(\mathit{NP}\)-hard (MINIMUM COVER of goal set by add lists).

Even if the precondition \(at()\) is dropped, resulting in \(0\) preconditions, with the \(2\) postconditions \(at(Y)\) and \(v(Y)\)), the problem complexity is still NP-complete, i.e. not effectively computable.

*Figure from Bylander, 1994 The Computational Complexity of Propositional STRIPS Planning, Artificial Intelligence Journal, Volume 69, pp 165–204

What approach, (a), (b) or (c), do we take if not constructible and/or computable?:

- We take approach (a): approximate \(h^{\prime *}\) in \(\mathcal{P}^{\prime}\) by counting the number of goals not currently true.

How to Relax During Search

How to Relax During Search: Diagram

Using a relaxation \(\mathcal{R} = (\mathcal{P}^{\prime}, r,h^{\prime *})\) during search:

\(\Pi_s\): is problem \(\Pi\) with initial state replaced by \(s\)

- i.e., \(\Pi = (F,A,c,I,G)\) changed to \((F,A,c,s,G)\) (initial sate \(s\))

This is the task of finding a plan for search state \(s\)

- We will be using this notation in the course

How to Relax During Search: Logistics Problem

- Initial state \(I\): \(AC\)

- \(AC\) is the state encoding used here, that denotes that truck is at \(A\) and package is at \(C\). If the package is loaded on the truck it is at \(T\).

- Goal \(G\): \(AD\)

- The goal is for the truck to take package to D from C, then return to A

- Actions \(A\): \(drXY\) (drive from \(X\) to \(Y\)), \(loX\) (load package \(X\)), \(ulX\) (unload \(X\))

- Each action also has corresponding \(pre\), \(add\) & \(del\)

How to Relax During Search: Goal-Counting?

How to Relax During Search: Ignoring Deletes?

Conclusion

Robot Question

Question

Say we have a robot with one gripper, two rooms \(A\) and \(B\), and \(n\) balls we must transport.

- The actions available are \(moveXY\), \(pickB\) and \(dropB\); say \(h=\) “the number of balls not yet in room \(B\)”.

- Can \(h\) be derived as \(h^{\mathcal{R}}\) for a relaxation \(\mathcal{R}\)?

(A): No

(C): Sure, every admissible \(h\) can be derived via a relaxation.

(B): Yes, just drop the deletes

(D): I’d rather relax at the beach

Answer:

We can define \(\mathcal{P}^{\prime}\) as the problem of computing the cardinality of a finite set, and define \(r\) as the function that maps a state to the set of balls not yet in room \(B\). So: (A) is incorrect, (B) is incorrect, we should drop both preconditions and deletes.

(C): Yes. Admissibility of \(h^{\mathcal{R}}\) is the only strict requirement made by the definition.

Summary

Relaxation is a method to compute heuristic functions.

Given a problem \(\mathcal P\) we want to solve, we define a relaxed problem \(\mathcal P'\).

- The heuristic is obtained by mapping into \(\mathcal P'\) and taking the solution to this simpler problem as the estimate.

Relaxations can be native, efficiently constructible, and/or efficiently computable.

- None of these is a strict requirement for usefulness.

During search, the relaxation is used only inside heuristic computation for each state.

- It does not affect the rest of the search (This can be confusing, especially for native relaxations such as ignoring deletes.)

Goal Counting Approximation: Limitations

The goal-counting approximation, which is essentially \(h=\) “count the number of goals currently not true” is a very uninformative heuristic function:

The range of heuristic values is small \((0 \dots |G|)\).

We can transform any planning task into an equivalent one where \(h(s) = 1\) for all non-goal states \(s\).

How?:

- Replace goal by new fact \(g\) and add a new action achieving \(g\) with precondition \(G\).

Ignores almost all structure: heuristic value does not depend on the actions at all!

Is \(h\) safe/goal-aware/admissible/consistent?:

- Only safe and goal-aware

We will see how to compute better heuristic functions in the next Module.